
The Four Hardest Problems in Chat Design

Designing a chat system at WhatsApp's scale is one of the most common system design interview questions — and the one where candidates lose the most points. Requirements and estimation were covered in Design WhatsApp. Today we go deep into the four problems that interviewers specifically probe for: Real-Time Messaging Architecture, Message Ordering, Offline Sync, and Multi-Region Replication.

Real-Time Messaging Architecture

WebSocket vs Long Polling: Why WebSocket Wins

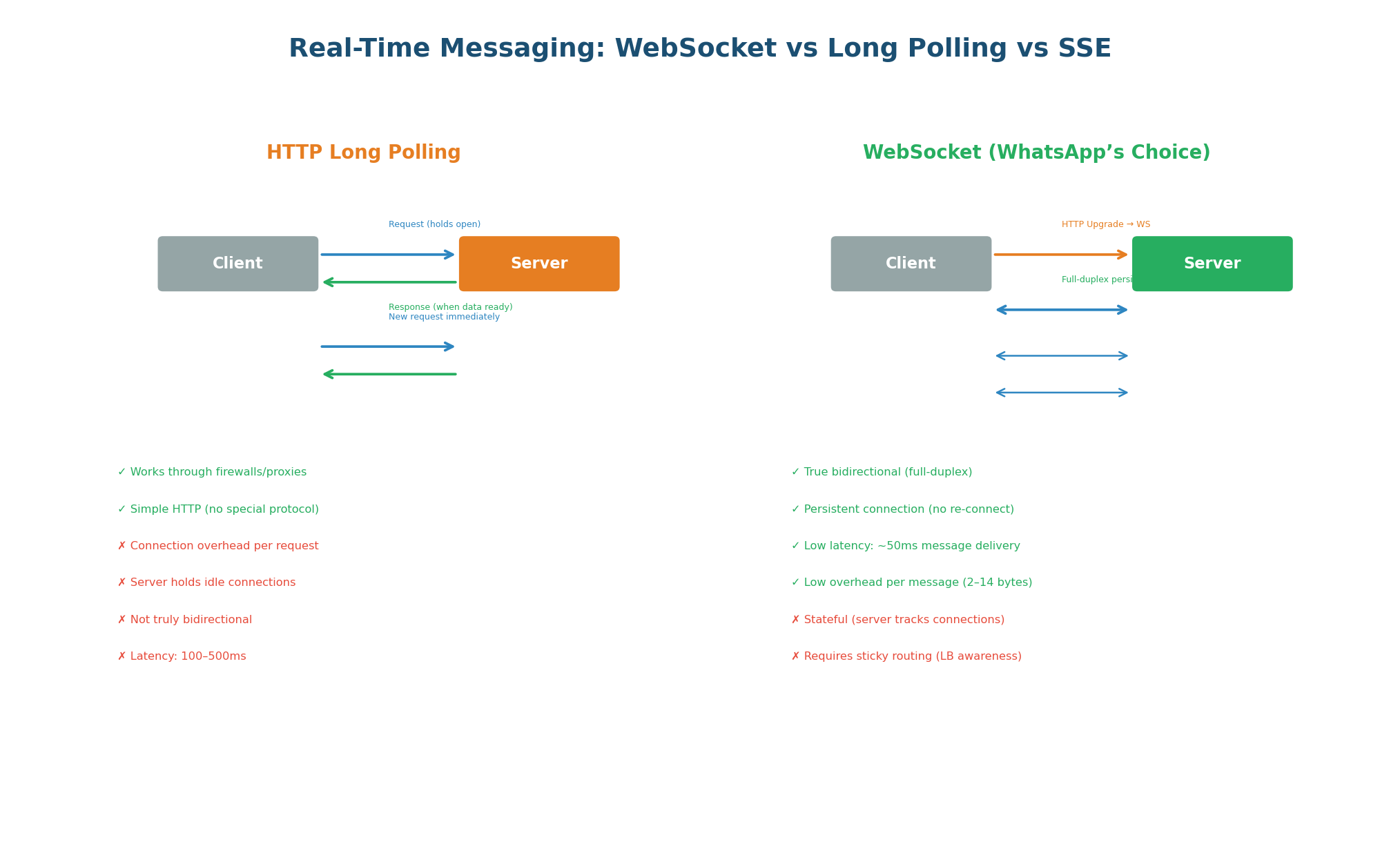

Real-time messaging requires the server to push each message to the client the instant it arrives, rather than waiting for the client to ask. Three approaches exist: short polling (wasteful), long polling (acceptable), and WebSocket (optimal). WhatsApp, Slack, Discord, and most major chat systems rely on a persistent connection of this kind, typically WebSocket, because it provides true bidirectional, full-duplex communication over a single persistent TCP connection.

Server-Sent Events are server-to-client only. Chat requires client-to-server messaging too (sending messages). SSE would require a separate HTTP POST channel for sends, creating two connections per user. WebSocket handles both directions on one connection. Never suggest SSE for a chat system in an interview.

WebSocket Gateway: Internal Architecture

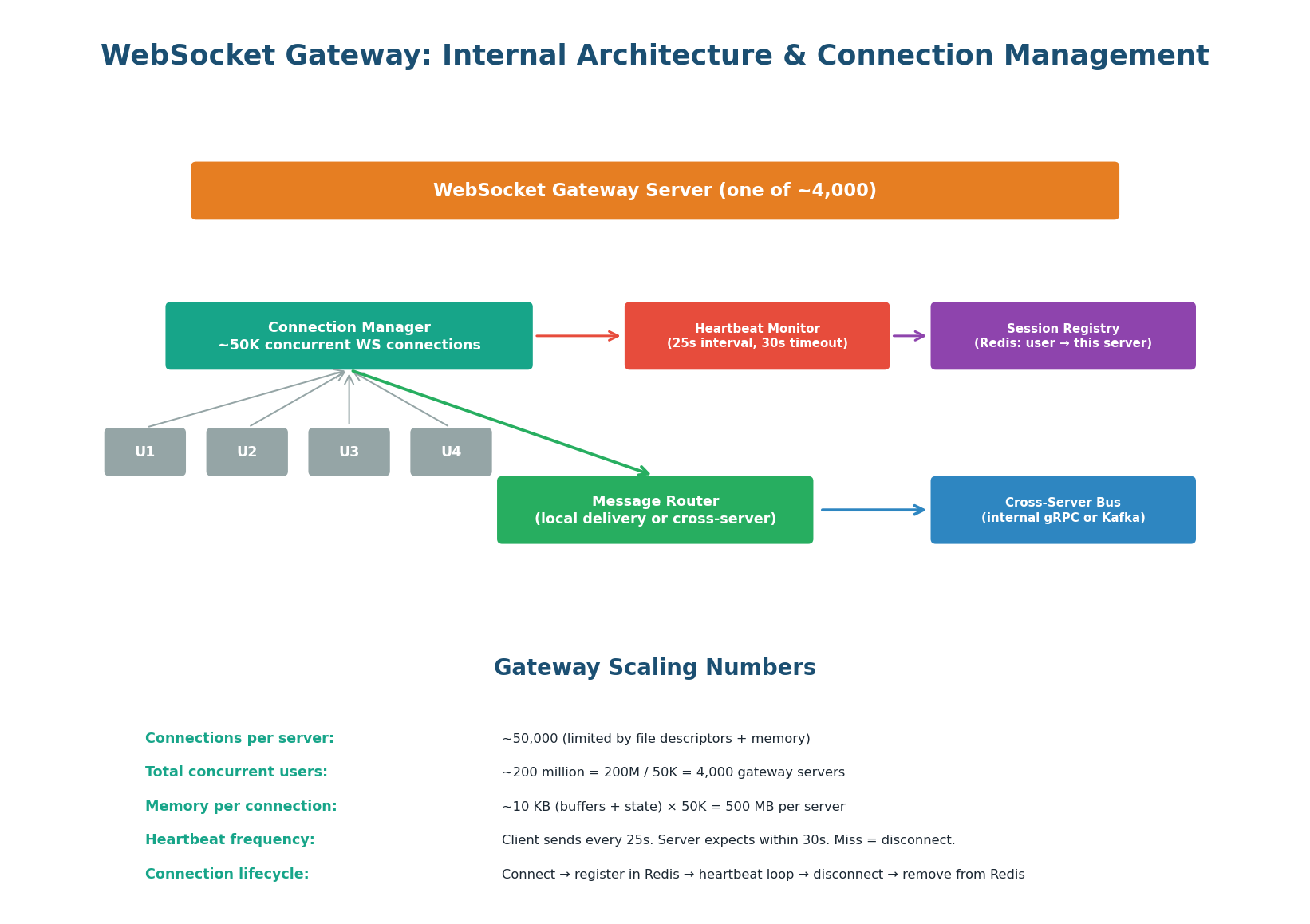

The WebSocket Gateway is the most critical component in a chat system. Each gateway server manages approximately 50,000 concurrent WebSocket connections. With 200 million concurrent users, you need approximately 4,000 gateway servers. Each server runs four internal components working in concert.

| Component | Responsibility | Key Detail |
|---|---|---|
| Connection Manager | Tracks all active WebSocket connections in memory | In-memory map: user_id → socket_fd. ~50K entries per server. |
| Heartbeat Monitor | Detects disconnected clients | Sends ping frame every 25 seconds. No pong = client dropped = clean up session. |
| Session Registry Updater | Writes user → server mapping to Redis | On connect: SET session:{user_id} gateway-42 EX 300. On disconnect: DEL. |
| Message Router | Delivers messages locally or forwards cross-server | If recipient on same server: direct delivery. Else: gRPC to correct gateway. |
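To make the interplay of the four components in the table concrete, here is a minimal sketch in Python. It is an illustration under assumptions, not a production gateway: `FakeSessionStore` is a hypothetical in-memory stand-in for Redis, the `Gateway` class and its method names are invented for this example, and a client counts as dead once its last pong is older than one 25-second ping interval.

```python
import time


class FakeSessionStore:
    """In-memory stand-in for Redis SET ... EX, GET and DEL (hypothetical)."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._data = {}  # key -> (value, expiry time)

    def set(self, key, value, ttl_seconds):
        self._data[key] = (value, self._clock() + ttl_seconds)

    def get(self, key):
        entry = self._data.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if expires_at <= self._clock():
            del self._data[key]  # expired, behave like a missing key
            return None
        return value

    def delete(self, key):
        self._data.pop(key, None)


class Gateway:
    SESSION_TTL = 300   # seconds, mirrors "EX 300" in the table
    PING_INTERVAL = 25  # seconds between heartbeat pings

    def __init__(self, gateway_id, sessions, clock=time.monotonic):
        self.gateway_id = gateway_id
        self.sessions = sessions
        self.clock = clock
        self.connections = {}  # Connection Manager: user_id -> socket handle
        self.last_pong = {}    # Heartbeat Monitor: user_id -> time of last pong

    def _register(self, user_id):
        # Session Registry Updater: user_id -> gateway id, with a TTL.
        self.sessions.set(f"session:{user_id}", self.gateway_id, self.SESSION_TTL)

    def on_connect(self, user_id, socket):
        self.connections[user_id] = socket
        self.last_pong[user_id] = self.clock()
        self._register(user_id)

    def on_pong(self, user_id):
        if user_id in self.connections:
            self.last_pong[user_id] = self.clock()
            self._register(user_id)  # heartbeat refreshes the session TTL

    def on_disconnect(self, user_id):
        self.connections.pop(user_id, None)
        self.last_pong.pop(user_id, None)
        self.sessions.delete(f"session:{user_id}")

    def reap_dead_connections(self):
        """Drop every client whose last pong is older than one ping interval."""
        cutoff = self.clock() - self.PING_INTERVAL
        dead = [u for u, seen in self.last_pong.items() if seen < cutoff]
        for user_id in dead:
            self.on_disconnect(user_id)
        return dead

    def route(self, recipient_id):
        """Message Router: decide between local delivery and forwarding."""
        if recipient_id in self.connections:
            return ("local", self.gateway_id)
        target = self.sessions.get(f"session:{recipient_id}")
        if target is None:
            return ("offline", None)
        return ("forward", target)
```

Two `Gateway` instances that share one `FakeSessionStore` are enough to try it out: `route()` returns `("local", ...)` for a user on the same gateway, `("forward", "gateway-789")` for a user registered elsewhere, and `("offline", None)` when no session exists. A real gateway would hold actual sockets and would usually allow a grace period before reaping.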

"Each WebSocket gateway manages 50K connections. When a connection arrives, the Connection Manager maps the user_id to the socket file descriptor in memory. Simultaneously, the Session Registry writes user_id → gateway_server_id to Redis with a 5-minute TTL (refreshed by heartbeats). The Heartbeat Monitor pings every 25 seconds — no pong means the connection is dead, we clean up Redis, and the client will reconnect. The Message Router checks if the recipient is local (direct delivery) or remote (gRPC to their gateway)."

Cross-Server Message Routing: The Key Challenge

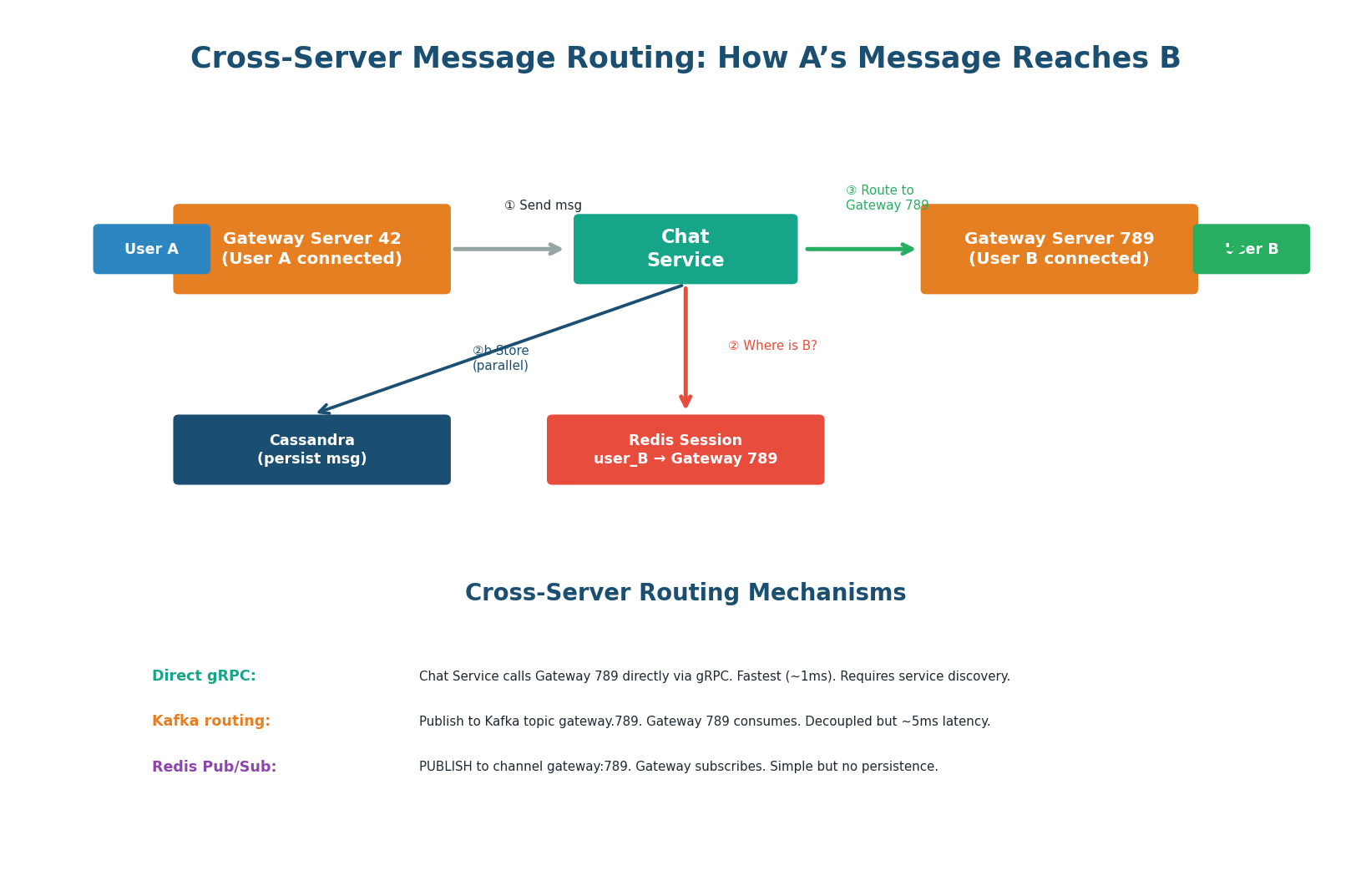

The central challenge of distributed chat: User A is connected to Gateway Server 42. User B is connected to Gateway Server 789. How does a message from A reach B? The answer is the Redis session store: a mapping of user_id → gateway_server_id. The Chat Service looks up B's gateway, then routes the message directly to Gateway 789 via gRPC (~1ms) or through an internal Kafka topic (~5ms).

The Redis session store (user_id → gateway_server_id) is the most important component in a distributed chat system. Without it, you'd need to broadcast messages to all gateways — O(n) instead of O(1). Every strong-hire answer mentions this lookup by name. If an interviewer asks "how does the message get to the right server?", this is the answer.
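The O(1) versus O(n) claim can be shown with a toy comparison. In this sketch a plain dictionary stands in for the Redis session store, and the function names and the 4,000-gateway fleet are illustrative assumptions based on the estimate earlier in the article.

```python
def gateways_to_contact(session_store, recipient_id):
    """With the session store: one lookup, at most one target gateway."""
    gateway = session_store.get(recipient_id)
    return [] if gateway is None else [gateway]


def gateways_to_contact_by_broadcast(all_gateways):
    """Without it: every gateway has to check its own connection table."""
    return list(all_gateways)


# Hypothetical fleet of 4,000 gateways.
all_gateways = [f"gateway-{i}" for i in range(4000)]
session_store = {"user-b": "gateway-789"}

targeted = gateways_to_contact(session_store, "user-b")
broadcast = gateways_to_contact_by_broadcast(all_gateways)
print(len(targeted), len(broadcast))  # prints: 1 4000
```

The lookup cost stays constant as the fleet grows, while the broadcast cost grows with every gateway added.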

Message Ordering — The Problem and Its Solution

Why Ordering Is Harder Than It Looks

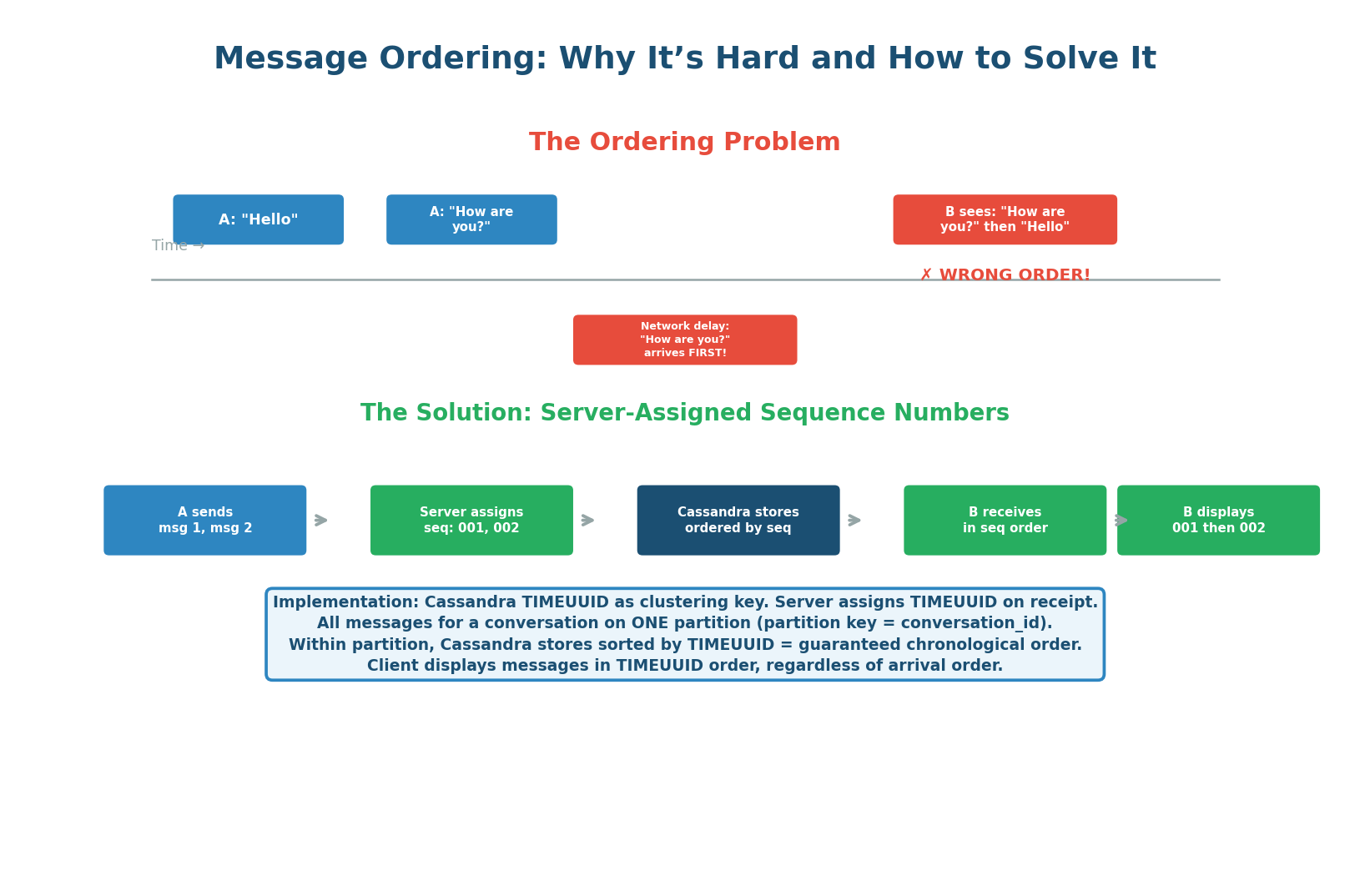

Message ordering seems trivial but is one of the hardest problems in distributed messaging. Due to network jitter, packet retransmission, or load balancer routing, "How are you?" might arrive at the server before "Hello." If the system stores and delivers messages in arrival order, User B sees them out of order.

Server-Assigned TIMEUUID: The Correct Fix

The server (not the client) assigns each message a time-ordered identifier as it arrives. In Cassandra, this is a TIMEUUID: a version 1 UUID that encodes the server's timestamp in 100-nanosecond units, plus clock-sequence and node bits that keep IDs generated in the same instant distinct. The TIMEUUID is used as the clustering key in Cassandra, which stores messages sorted by this key within each partition.

Client clocks are unreliable: User A's phone might be 5 minutes ahead. User B's phone might be 3 minutes behind. Client-assigned timestamps would produce ordering chaos. Server-assigned TIMEUUIDs use the server's NTP-synchronized clock, providing a consistent time reference within each conversation. This is a non-negotiable design choice.

"When a message arrives at the Chat Service, I assign it a TIMEUUID. This is a Cassandra-native UUID type that encodes the current server timestamp at 100-nanosecond resolution, plus clock-sequence and node bits to break ties within the same tick. I store this as the clustering key in Cassandra's messages table, partitioned by conversation_id. Cassandra physically sorts rows by clustering key, so all messages in a conversation are stored in chronological order automatically. I never trust client-side timestamps, because client clocks drift and can be manipulated."

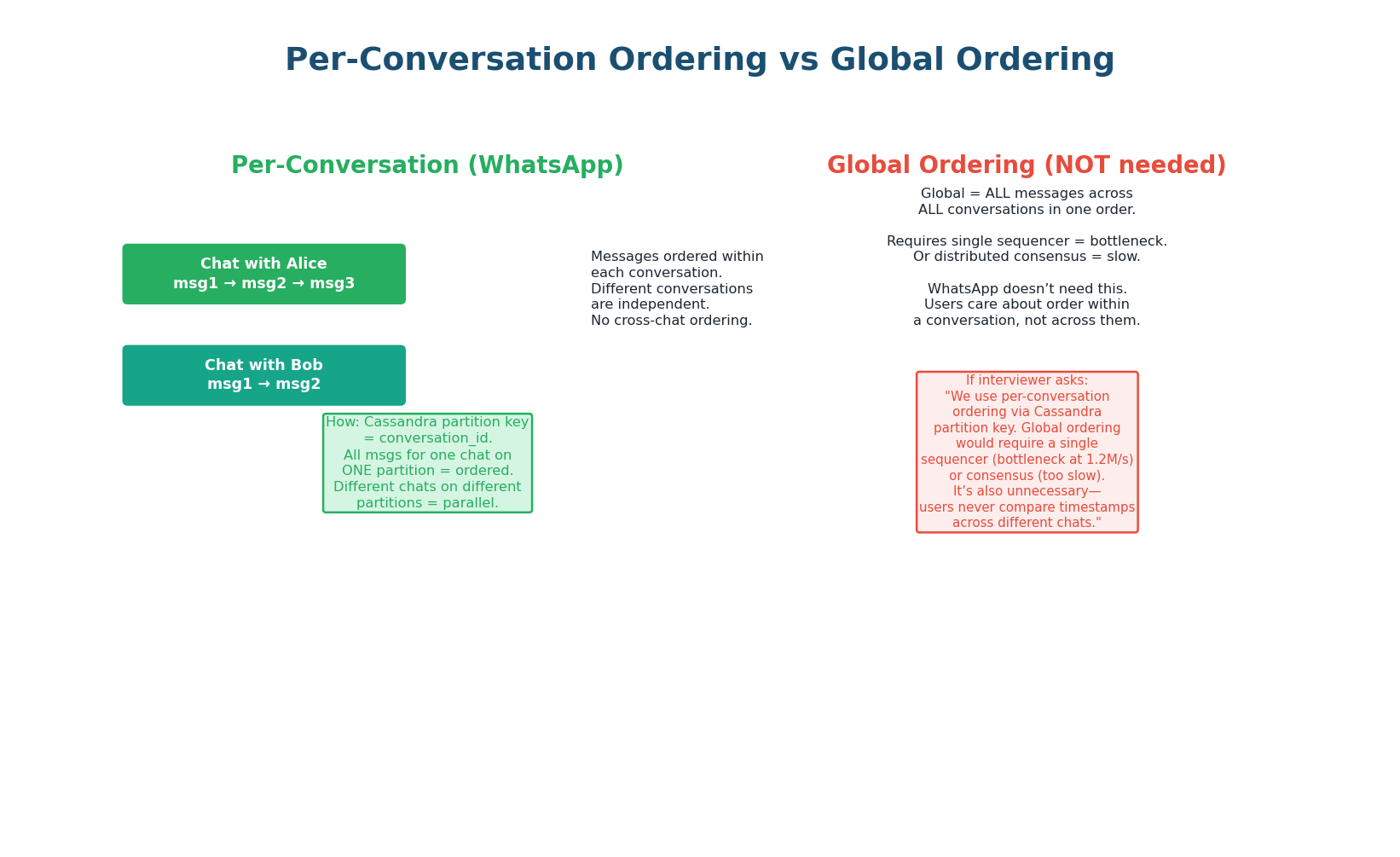
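The effect of server-assigned IDs can be reproduced with Python's standard `uuid` module, since Cassandra's TIMEUUID type is a version 1 UUID. The sketch below is illustrative only: `make_timeuuid` is a hypothetical helper that builds a version 1 UUID from an explicit server timestamp (so the example is deterministic), the timestamps are made up, and sorting by `(time, bytes)` only approximates Cassandra's comparator.

```python
import uuid

# 100 ns ticks between the UUID epoch (1582-10-15) and the Unix epoch.
UUID_EPOCH_OFFSET = 0x01B21DD213814000


def make_timeuuid(unix_micros, clock_seq=0, node=0x42):
    """Build a version 1 UUID from a server timestamp (hypothetical helper)."""
    ticks = unix_micros * 10 + UUID_EPOCH_OFFSET
    return uuid.UUID(
        fields=(
            ticks & 0xFFFFFFFF,       # time_low
            (ticks >> 32) & 0xFFFF,   # time_mid
            (ticks >> 48) & 0x0FFF,   # time_hi (version bits are set below)
            (clock_seq >> 8) & 0x3F,  # clock_seq_hi (variant bits set below)
            clock_seq & 0xFF,         # clock_seq_low
            node,                     # 48-bit node id
        ),
        version=1,
    )


BASE = 1_700_000_000_000_000  # arbitrary server time in microseconds
AHEAD = 300_000_000           # sender's phone is 5 minutes ahead
BEHIND = 180_000_000          # sender's phone is 3 minutes behind

# (server arrival time, skewed client timestamp, text)
arrivals = [
    (BASE + 1_000_000, BASE + 1_000_000 + AHEAD, "Hello"),
    (BASE + 2_000_000, BASE + 2_000_000 - BEHIND, "Hi"),
    (BASE + 3_000_000, BASE + 3_000_000 + AHEAD, "How are you?"),
]

messages = [
    {"id": make_timeuuid(server_ts), "client_ts": client_ts, "text": text}
    for server_ts, client_ts, text in arrivals
]

by_client_clock = [
    m["text"] for m in sorted(messages, key=lambda m: m["client_ts"])
]
by_timeuuid = [
    m["text"]
    for m in sorted(messages, key=lambda m: (m["id"].time, m["id"].bytes))
]

print(by_client_clock)  # ['Hi', 'Hello', 'How are you?']
print(by_timeuuid)      # ['Hello', 'Hi', 'How are you?']
```

Sorting by the skewed client timestamps puts the reply before the greeting, while sorting by the server-assigned ID recovers the order in which the server received the messages.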

Per-Conversation Ordering vs Global Ordering

WhatsApp needs only per-conversation ordering — users compare message order within a single chat, never across different conversations. Global ordering would require a single coordination point across all 1.2 million messages/second — a fatal bottleneck. Cassandra's partition key (conversation_id) naturally provides per-conversation ordering with no coordination overhead.

| Approach | Mechanism | Scalability | Use When |
|---|---|---|---|
| Per-conversation | Cassandra partition: conversation_id, clustering: TIMEUUID | Horizontal: each partition is independent | Chat (WhatsApp, Slack, Discord) ✓ |
| Global ordering | Single Kafka partition or ZooKeeper counter | Single bottleneck, max ~500K/sec | Financial ledgers, audit logs |
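A toy model shows why per-conversation ordering needs no cross-partition coordination. This is a sketch under assumptions: `PartitionedMessageStore` is a hypothetical in-memory class, and plain integers stand in for TIMEUUID clustering keys.

```python
import bisect
from collections import defaultdict


class PartitionedMessageStore:
    """Toy table: partitioned by conversation_id, sorted by message id."""

    def __init__(self):
        # conversation_id -> list of (message_id, body), kept sorted
        self._partitions = defaultdict(list)

    def insert(self, conversation_id, message_id, body):
        # Only the target partition is touched; no other partition and no
        # global counter is involved in keeping it sorted.
        bisect.insort(self._partitions[conversation_id], (message_id, body))

    def read(self, conversation_id):
        return [body for _, body in self._partitions.get(conversation_id, [])]


store = PartitionedMessageStore()
store.insert("conv-1", 20, "How are you?")  # written first, sorts second
store.insert("conv-2", 15, "Lunch?")
store.insert("conv-1", 10, "Hello")

print(store.read("conv-1"))  # ['Hello', 'How are you?']
print(store.read("conv-2"))  # ['Lunch?']
```

Each conversation is sorted on its own, so writes to different conversations never contend with each other.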

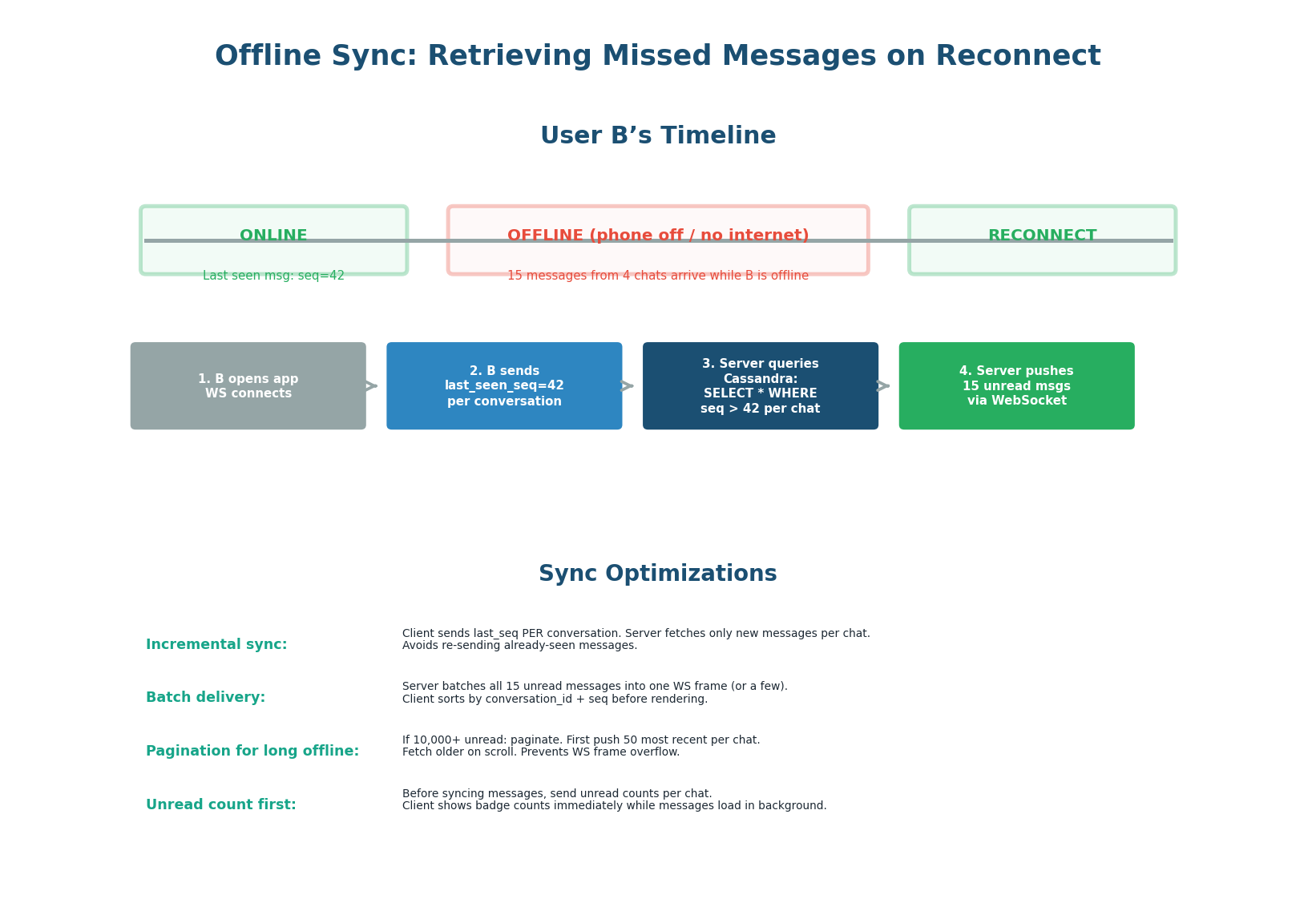

Offline Sync — Retrieving Missed Messages on Reconnect

The Offline Sync Flow: 6 Steps

While the user is offline, messages continue to arrive and are stored in Cassandra. When the user reconnects, the system must efficiently synchronize all missed messages without re-sending messages the user has already seen. The key mechanism is per-conversation cursors: the client tracks the last message_id it has seen in each conversation.

- User B opens the app. The client establishes a WebSocket connection to the nearest gateway server.

- The client sends a sync request: `{user_id, last_sync_timestamp, per_conversation_cursors}`. Each cursor is the `message_id` of the last message B has seen in each conversation.

- The server computes the delta: `SELECT * FROM messages WHERE conversation_id = ? AND message_id > last_seen_cursor`

- The server first pushes unread counts per conversation (instant badge numbers), then streams actual messages in batches of 50, most recent first.

- The client stores messages in its local SQLite database, updates cursors, and sends delivery acknowledgments.

- If the user typed messages while offline (outbox), the client sends them now. The server deduplicates by client-assigned `message_id`.
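The delta computation and the outbox deduplication from the steps above can be sketched as follows. Everything here is an illustrative assumption: integers stand in for message IDs, `conversations` is taken to hold only the conversations this user belongs to, and `compute_delta` and `OutboxReceiver` are hypothetical names.

```python
def compute_delta(conversations, cursors):
    """Return {conversation_id: [rows newer than the client's cursor]}.

    conversations: conversation_id -> list of (message_id, body), oldest first
    cursors:       conversation_id -> last message_id the client has seen
    """
    delta = {}
    for conv_id, rows in conversations.items():
        last_seen = cursors.get(conv_id)  # None: client has nothing yet
        missed = [
            row for row in rows if last_seen is None or row[0] > last_seen
        ]
        if missed:
            delta[conv_id] = missed
    return delta


class OutboxReceiver:
    """Accepts outbox messages once per client-assigned message id."""

    def __init__(self):
        self._seen_ids = set()
        self.accepted = []

    def receive(self, client_message_id, body):
        if client_message_id in self._seen_ids:
            return False  # duplicate from a retry, ignore it
        self._seen_ids.add(client_message_id)
        self.accepted.append(body)
        return True


conversations = {
    "conv-1": [(1, "Hello"), (2, "How are you?"), (3, "Still there?")],
    "conv-2": [(7, "Lunch?")],
    "conv-3": [(4, "New group created")],
}
cursors = {"conv-1": 1, "conv-2": 7}

delta = compute_delta(conversations, cursors)
# conv-1: messages 2 and 3, conv-2: nothing new, conv-3: everything
```

Calling `receive("abc", "On my way")` twice on the same `OutboxReceiver` stores the message once, which is what makes client retries after a flaky reconnect safe.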

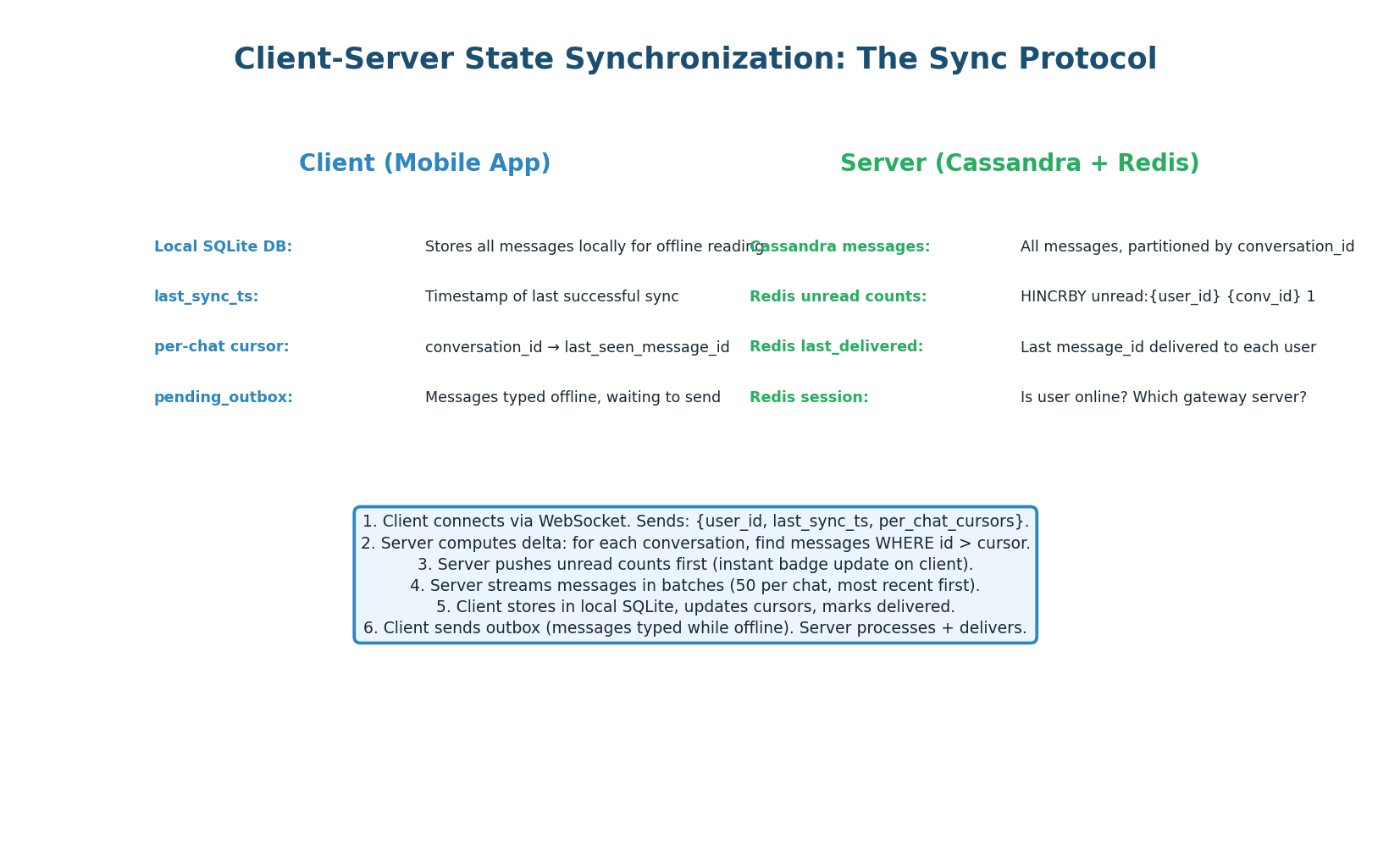

Client-Server State Synchronization

| State | Location | Details |
|---|---|---|
| Message history | Client: SQLite; Server: Cassandra | Cassandra is source of truth. Client is a cache of recent messages. |
| Per-conversation cursors | Client: local storage | Last seen message_id per conversation. Drives delta query on reconnect. |
| Pending outbox | Client: SQLite queue | Messages typed while offline. Sent on reconnect. Server deduplicates by client UUID. |
| Unread counts | Server: Redis | HINCRBY unread:{user_id} {conv_id} 1 on every message arrival. Pushed first on sync. |
| Session state | Server: Redis | user_id → gateway_server_id. Cleared on disconnect, set on connect. |

If a user was offline for 3 days with 2,000 missed messages across 40 conversations, dumping all messages at once would block the UI. Streaming in batches of 50, most-recent-first, means the user sees the newest messages immediately while older messages load in the background. The unread count badge is pushed before any messages, so the UI is responsive from the first render.
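The batching described here is simple to express as a generator. This is a minimal sketch, assuming the missed messages are already collected in one list sorted oldest first; `stream_batches` is a hypothetical name and integers stand in for messages.

```python
def stream_batches(messages, batch_size=50):
    """Yield batches newest first; `messages` must be sorted oldest first."""
    newest_first = messages[::-1]
    for start in range(0, len(newest_first), batch_size):
        yield newest_first[start:start + batch_size]


missed = list(range(2000))  # 2,000 missed messages, oldest first
batches = list(stream_batches(missed))

print(len(batches))    # 40
print(batches[0][:3])  # [1999, 1998, 1997]
```

With 2,000 missed messages the client receives 40 batches, and the first one already contains the 50 newest messages, so the UI can render while the remaining 39 batches arrive.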

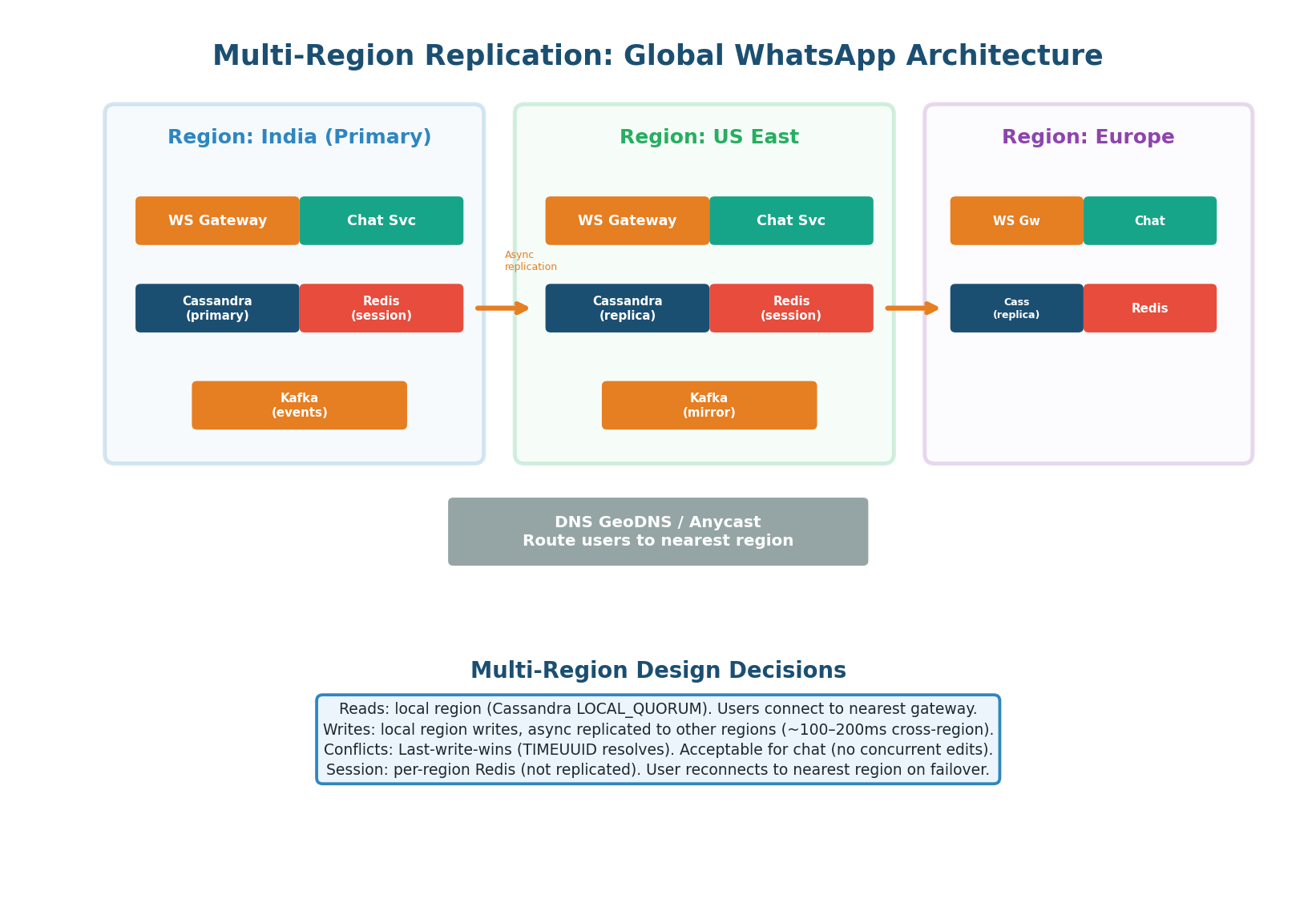

Multi-Region Replication — Global Architecture

Three-Region Deployment

WhatsApp serves roughly 2 billion users worldwide. Multi-region deployment places infrastructure in 3+ regions. Messages within a region take ~100ms. Cross-region messages add ~200ms for the inter-region hop, for ~300ms end to end. Each region contains a complete, independent stack, with no single-region dependency for normal operations.

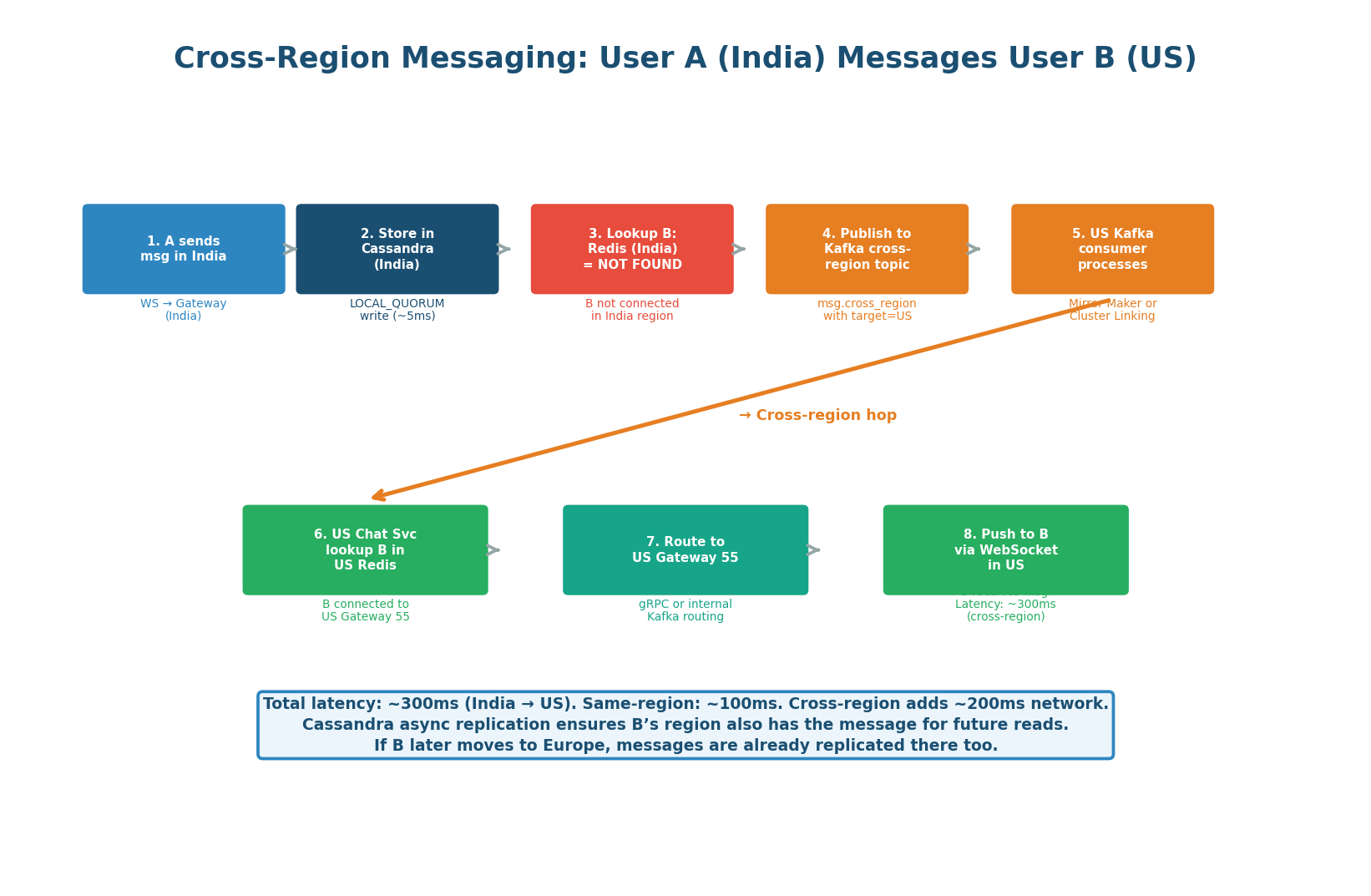

Cross-Region Message Flow: 8 Steps, ~300ms

- Message stored in India's Cassandra using LOCAL_QUORUM (~5ms)

- Chat Service checks India Redis — User B not connected locally (no session found)

- Message published to Kafka cross-region topic in India

- Kafka MirrorMaker replicates to US Kafka cluster (~100–200ms)

- US Chat Service consumes message from Kafka

- US Chat Service looks up User B's session in US Redis → finds Gateway 789

- Message routed via gRPC to Gateway 789 (~1ms)

- Gateway 789 delivers to User B's WebSocket connection. Total: ~300ms
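A quick latency budget helps check how the per-step figures relate to the ~300ms total. The sketch below uses only the numbers stated in the steps above. The remaining steps (Redis lookups, Kafka publish and consume, the client network hops) have no figure in the flow, so they are lumped into an unattributed remainder rather than guessed.

```python
# Per-step figures stated in the flow, in milliseconds: (low, high).
STATED_STEPS_MS = {
    "cassandra_local_quorum_write": (5, 5),
    "kafka_mirrormaker_replication": (100, 200),
    "grpc_to_gateway": (1, 1),
}
TOTAL_BUDGET_MS = 300  # end-to-end figure quoted for the cross-region flow


def stated_range(steps):
    """Sum the low and high ends of every step that has a stated figure."""
    low = sum(lo for lo, _ in steps.values())
    high = sum(hi for _, hi in steps.values())
    return low, high


def unattributed_range(steps, total):
    """Time left for the steps the flow does not quantify."""
    low, high = stated_range(steps)
    return total - high, total - low


print(stated_range(STATED_STEPS_MS))                         # (106, 206)
print(unattributed_range(STATED_STEPS_MS, TOTAL_BUDGET_MS))  # (94, 194)
```

The inter-region replication dominates the stated portion, which is why the design keeps every other step local to a region.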

| Design Decision | Choice | Reason | When to Switch |
|---|---|---|---|
| Read consistency | LOCAL_QUORUM | All reads are local. No cross-region read latency needed for chat history. | Never: cross-region reads add 200ms latency with no benefit |
| Write replication | Local + async replication | Cassandra async replication for durability. Kafka for real-time cross-region delivery. | Sync if financial data requiring global consistency |
| Conflict resolution | Last-Write-Wins (TIMEUUID) | Safe because chat messages are append-only, with no updates. | CRDT if mutable data (e.g., presence, shared docs) |
| Session store | Per-region, NOT replicated | Avoids global session replication complexity. User is online in one region at a time. | Never: cross-region session replication adds 200ms per connect |

12-Point Design Checklist

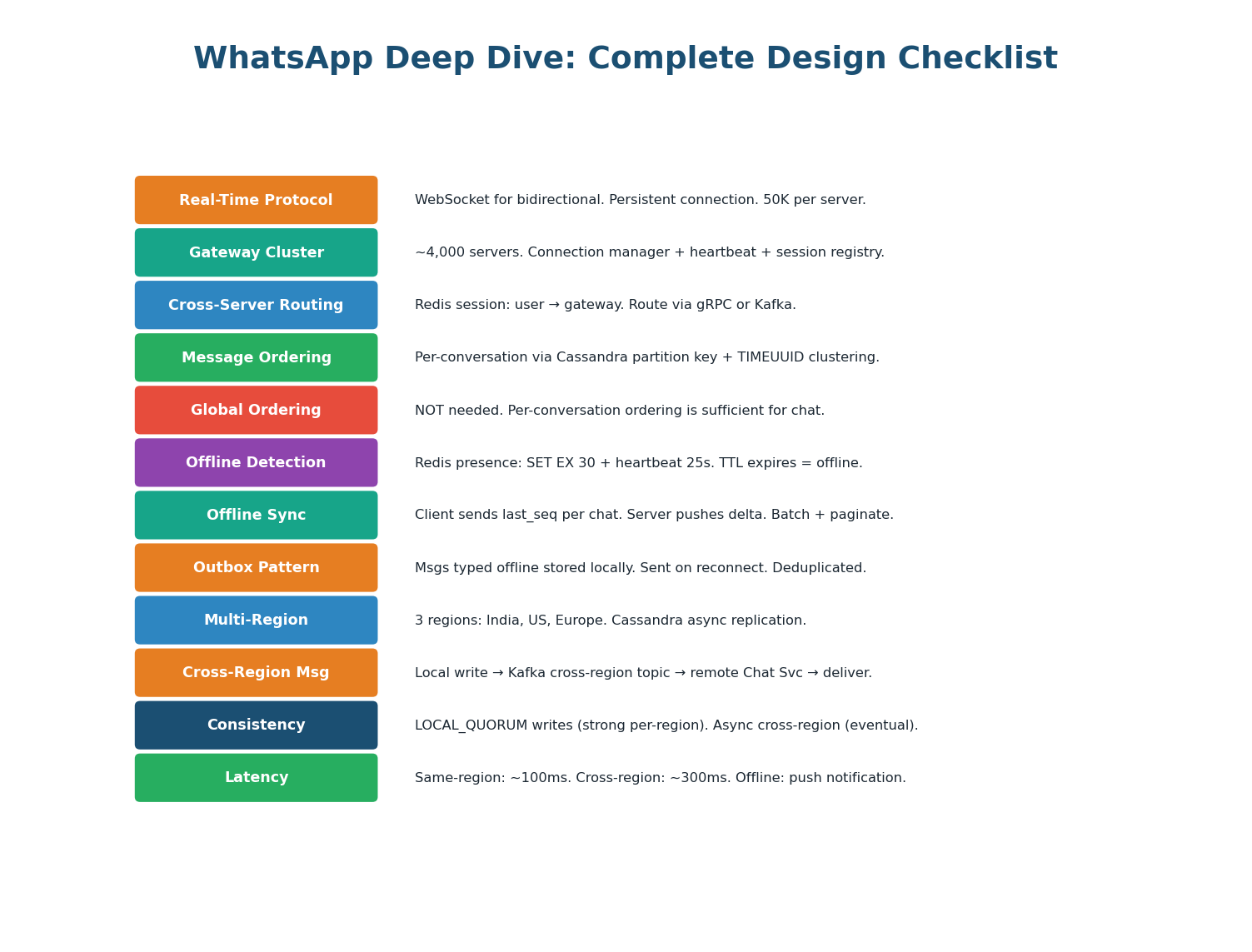

- Real-Time Messaging: WebSocket (not SSE, not polling). 50K connections/server. ~4,000 servers for 200M concurrent users. Sticky LB routing required. Gateway runs Connection Manager + Heartbeat Monitor (25s ping) + Session Registry updater + Message Router.

- Cross-Server Routing: Redis session store maps `user_id → gateway_server_id`. Chat Service looks up the recipient's gateway in Redis, then routes via gRPC (~1ms) or Kafka (~5ms). This is the #1 insight interviewers look for.

- Message Ordering: Server-assigned TIMEUUIDs (never client timestamps). Cassandra: partition key = `conversation_id`, clustering key = TIMEUUID. Per-conversation ordering only; global ordering is a bottleneck at 1.2M msgs/sec.

- Offline Sync: Per-conversation cursors on reconnect. Delta query: `message_id > last_seen_cursor`. Unread counts pushed first (Redis HINCRBY). Messages streamed in batches of 50, most recent first. Outbox pattern + client UUID deduplication.

- Multi-Region: 3 regions, full independent stack each. LOCAL_QUORUM reads and writes. Async Cassandra replication. Kafka MirrorMaker for cross-region real-time delivery. Session store per-region (not replicated globally). LWW via TIMEUUID for conflict resolution.
